Behind the Root: Linux Filesystem Demystified

Introduction

If you've ever installed and tried out Linux after seeing a cool hacking sequence in a sci-fi movie, you know that opening it feels like stepping into a strange city with many roads named /bin, /home and /tmp. As beginners, we tend to ignore them and go on with our Linux usage by simply remembering the most commonly used commands.

While that approach works initially, it is good to take a step back and understand the Linux Filesystem properly. As "good" developers, who actually get their code out of localhost, we need to understand Linux to its core, because that is what drives the majority of web servers.

In this blog post, I have broken down the philosophy behind the Linux Filesystem, what all those randomly named directories actually do, and some cool insights along the way.

The Core Philosophy: Everything is a File

A fascinating thing about Linux is that everything, literally everything in Linux, is represented as a file in the Filesystem. This includes not just documents, but devices, processes, sockets, and even hardware!

To digest this idea properly, we need to change our perspective on what a "file" actually means. We usually think of a "file" as a document on our system. In Linux though, a "file" is just a stream of bytes with a path. That's it. Anything that can be read from or written to fits that definition.

Let's look at some examples of files as per this new definition:

| Path | Description |
|---|---|
| /home/user/gta-cheat-codes.txt | Just a simple file that the user has created |
| /dev/input/... | Your input devices like keyboard, mouse |
| /dev/sda | Literally your hard drive |
| /proc/1234 | A running process |

Since everything is a file, you can do powerful things like pipe data between programs, redirect output, and chain tools together. This is the exact reason why the Linux terminal is so powerful.
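To make this concrete, here is a minimal Python sketch that reads a regular file and a device through the exact same calls. The helper name read_first_bytes is made up for illustration, and the sketch assumes a Linux system where /dev/zero exists.

```python
import tempfile


def read_first_bytes(path: str, count: int) -> bytes:
    """Read up to `count` bytes from any path: regular file or device node."""
    with open(path, "rb") as stream:
        return stream.read(count)


if __name__ == "__main__":
    # A regular file on disk.
    with tempfile.NamedTemporaryFile(suffix=".txt") as tmp:
        tmp.write(b"hello, filesystem")
        tmp.flush()
        print(read_first_bytes(tmp.name, 5))

    # A device file: the kernel produces an endless stream of zero bytes.
    print(read_first_bytes("/dev/zero", 4))
```

The function has no idea whether it is talking to a disk or to the kernel, and it does not need to.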

This core philosophy is what gives the Linux Filesystem its structure.

Filesystem Hierarchy Standard (FHS)

Before we dive deep into the directory structure, I want to mention that the Linux Filesystem isn't a random arrangement of resources in a tree-like structure. There is a specification called the "Filesystem Hierarchy Standard" that defines the directory structure and contents in Linux and other Unix-like operating systems. It is maintained by the Linux Foundation.

The Problem it Solves

Before FHS, different Unix distributions placed files wherever they wanted. A config file might live in /etc on one system and /usr/config on another. This made it a nightmare to:

Write portable software

Move between distributions

Predict where anything lives

FHS serves as a guideline, which most major distros like Debian, Fedora and Arch follow closely to maintain uniformity and predictability.

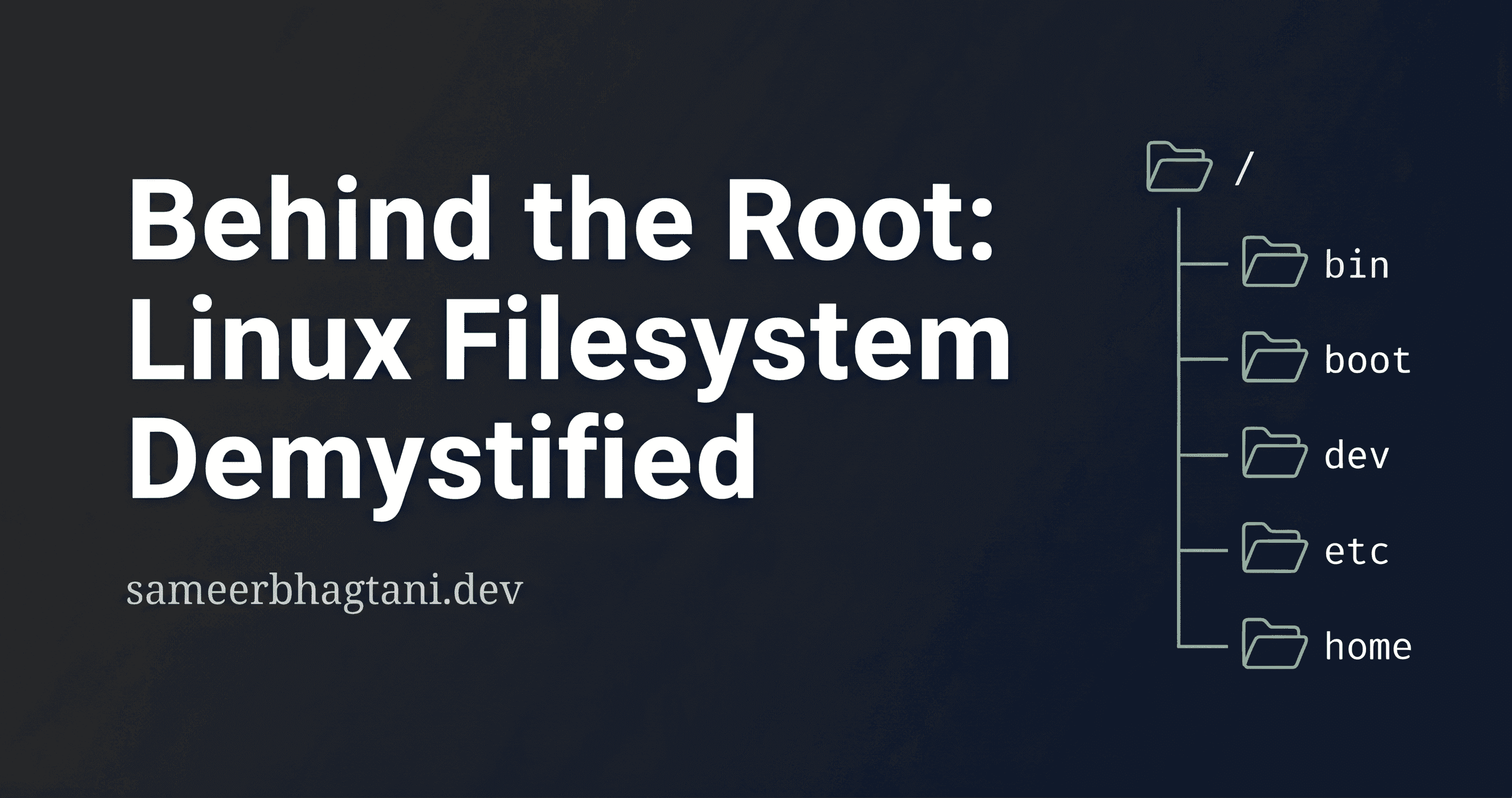

The Root Directory

The root directory is the top of the entire filesystem tree in Linux. It is represented by /. The reason it is called the "root" is because there is nothing above it. You can go back as much as you want but never beyond the "root".

If you're from the Windows ecosystem, the closest analogy is C:\, with one important difference: Linux has a single tree. Files, devices, process information, and external drives all appear somewhere under /, instead of each drive getting its own letter.

Running ls / on a typical Linux system shows the following directories:

/

├── bin -> Essential command binaries

├── boot -> Kernel and bootloader files

├── dev -> Device files to interface with hardware

├── etc -> Text-based configuration files

├── home -> Personal directories for each user

├── lib -> Shared library files used by binaries

├── media -> Mount point for removable media (USBs, CDs)

├── mnt -> Temporary mount point for filesystems

├── opt -> Optional, add-on software packages

├── proc -> Virtual filesystem tracking running processes

├── root -> Home directory for the root user

├── run -> Runtime data for processes since last boot

├── sbin -> System binaries for administrative use

├── srv -> Data served by the system (FTP, HTTP, etc.)

├── sys -> Virtual filesystem exposing kernel internals

├── tmp -> Temporary files, usually cleared on reboot

├── usr -> Secondary hierarchy for installed programs, libraries and shared data

└── var -> Variable data like logs, caches and spools

/etc: System Configuration Hub

I personally like to refer to it as the "Configuration Hub" of the entire system, because almost every major program and service keeps its system-wide config file here.

Moreover, nearly all of it is plain text. This simple design choice makes configurations readable and easily editable, and it is a big part of what makes Linux so customizable.

/etc

├── hostname -> The machine's host name

├── hosts -> Local DNS overrides

├── nsswitch.conf -> Controls the order of lookups

├── resolv.conf -> DNS server configuration

├── network/ -> Network interface configuration (Debian)

├── netplan/ -> Network interface configuration (Ubuntu)

├── passwd -> User account information

├── shadow -> Hashed user passwords

├── group -> Group memberships

├── sudoers -> Controls who can run commands as root

├── environment -> System-wide environment variables

├── profile -> Shell environment on login

└── systemd/

└── system/ -> Service unit files

Password Storage

Most people assume passwords are stored somewhere as plain text or in one file. Linux is much smarter than that. It splits user information across multiple files with specific jobs.

/etc/passwd

Despite the name, this file does not store passwords anymore. It stores user account information. Every line is one user, and it looks like this:

root:x:0:0:root:/root:/bin/bash

sameer:x:1000:1000:Sameer Bhagtani:/home/sameer:/bin/bash

Each colon-separated field means:

Username

Password field: just an x, meaning "go look in /etc/shadow"

UID: User ID, a unique number for the user

GID: Group ID, the user's primary group

GECOS: Full name or description

Home directory

Default shell
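The field layout above maps neatly onto a small parser. This is only a sketch for illustration: PasswdEntry and parse_passwd_line are names I made up, and the sample line is the one shown earlier.

```python
from typing import NamedTuple


class PasswdEntry(NamedTuple):
    username: str
    password: str  # "x" means the hash lives in /etc/shadow
    uid: int
    gid: int
    gecos: str
    home: str
    shell: str


def parse_passwd_line(line: str) -> PasswdEntry:
    """Split one /etc/passwd line into its seven colon-separated fields."""
    name, password, uid, gid, gecos, home, shell = line.rstrip("\n").split(":")
    return PasswdEntry(name, password, int(uid), int(gid), gecos, home, shell)


if __name__ == "__main__":
    entry = parse_passwd_line(
        "sameer:x:1000:1000:Sameer Bhagtani:/home/sameer:/bin/bash"
    )
    print(entry.username, entry.uid, entry.home, entry.shell)
```

In real code you would not parse the file by hand. Python's standard pwd module (for example pwd.getpwnam("root")) returns the same fields.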

/etc/shadow

This is where the actual passwords live. Linux doesn't store them in plain text format. Instead, it hashes the password for security.

This file is only readable by root. A line looks like:

root:$y$j9T$randomsalt$hashedpasswordstring:20384::::::

Each colon-separated field means:

Username

Hashed password

Last password change: days since Jan 1, 1970

Minimum password age: days before password can be changed

Maximum password age: days before password must be changed

Warning period: days before expiry to warn the user

Remaining fields: inactivity period, expiry date, reserved
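Two of these fields are worth decoding by hand once: the hash prefix, which names the hashing scheme, and the "days since Jan 1, 1970" counter. Below is a rough sketch that does both. The function name and the small scheme table are my own, and the table covers only a few common identifiers.

```python
from datetime import date, timedelta

# Common crypt(3) identifiers found between the first two "$" signs.
HASH_SCHEMES = {"1": "MD5", "5": "SHA-256", "6": "SHA-512", "y": "yescrypt"}


def describe_shadow_line(line: str) -> dict:
    """Pull the username, hash scheme and last-change date out of a shadow line."""
    fields = line.rstrip("\n").split(":")
    username, hashed, last_change = fields[0], fields[1], fields[2]
    # A real hash looks like $id$...; locked or empty entries have no "$" parts.
    parts = hashed.split("$")
    scheme = HASH_SCHEMES.get(parts[1], "unknown") if len(parts) > 2 else "none"
    changed_on = (
        date(1970, 1, 1) + timedelta(days=int(last_change)) if last_change else None
    )
    return {"username": username, "scheme": scheme, "changed_on": changed_on}


if __name__ == "__main__":
    sample = "root:$y$j9T$randomsalt$hashedpasswordstring:20384::::::"
    print(describe_shadow_line(sample))
```

For the sample line, the $y$ prefix identifies yescrypt, and day 20384 works out to 23 October 2025.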

Groups

A group in Linux is simply a collection of users. Linux uses groups to manage permissions at scale. Instead of setting permissions for each user individually, you assign permissions to a group and add users to it.

For example, say you have five developers who all need access to /var/www/. Instead of giving each of them individual access, you pick a shared group (www-data is a common choice, and on Debian-based systems it already exists for the web server), give that group access to the folder, and add all five users to it.

All this information is stored in /etc/group, where a line looks like:

network:x:27:user1,user2

Each colon-separated field means:

Group name

Password: almost always x or empty, group passwords are rarely used

GID: Group ID

Members: comma-separated list of users in the group
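As a quick illustration of how the members field is used, here is a sketch that answers "which groups list this user?" from /etc/group-style text. The function name and the sample GIDs are made up. Note that a user's primary group comes from /etc/passwd and usually does not appear in the members list.

```python
def groups_for_user(group_file_text: str, user: str) -> list[str]:
    """Return names of groups whose member list (4th field) includes `user`."""
    matches = []
    for line in group_file_text.splitlines():
        if not line or line.startswith("#"):
            continue
        name, _password, _gid, members = line.split(":")
        if user in members.split(","):
            matches.append(name)
    return matches


if __name__ == "__main__":
    # Hypothetical file contents, for illustration only.
    sample = "wheel:x:10:user1\naudio:x:29:\nnetwork:x:27:user1,user2\n"
    print(groups_for_user(sample, "user1"))
    print(groups_for_user(sample, "user2"))
```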

Default Groups

Here is what some common default groups grant. The exact names vary by distro; for example, Debian and Ubuntu use sudo instead of wheel:

| Group | What it gives you access to |
|---|---|
| wheel | sudo privileges |
| audio | Sound devices |
| video | GPU and display devices |
| storage | Mounting drives and storage devices |
| network | Managing network connections |

DNS Configuration

When you type google.com in your browser, your system needs to figure out the IP address behind it. This process is called DNS resolution. I have written a blog post about DNS resolution in depth, in case you're interested:

https://blog.sameerbhagtani.dev/dns-resolution-explained

Linux handles this in a specific order, and /etc controls that entire pipeline.

/etc/hosts

This is checked before any DNS server is even contacted. It's a simple static lookup table that maps hostnames to IP addresses manually. It looks like:

127.0.0.1 localhost

192.168.1.10 myserver.local

So if you add google.com here pointing to some other IP, your system will use that and never bother asking a DNS server. This is why it's called a local DNS override. Developers use this all the time to point a domain to their local machine for testing.

/etc/resolv.conf

This is where your DNS servers are defined. When /etc/hosts has no answer, your system goes here to find out which DNS server to ask. It looks like:

nameserver 8.8.8.8

nameserver 8.8.4.4

The above example shows Google's DNS servers. You can also use 1.1.1.1, which is Cloudflare's.

/etc/nsswitch.conf

This is the traffic controller of the whole resolution process. It defines the order in which your system looks things up. The relevant line looks like:

hosts: files dns

This tells the system: first check files (which means /etc/hosts), then go to dns (which means asking the servers listed in /etc/resolv.conf). With this ordering, an entry in /etc/hosts always wins over DNS servers.

The full DNS resolution flow

Check /etc/nsswitch.conf for the lookup order

Check /etc/hosts for a static entry

No match? Go to /etc/resolv.conf for the DNS server

Ask that DNS server for google.com

Get the IP back, connect
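The flow above can be sketched in a few lines of Python. This is a toy model, not how glibc implements it: parse_hosts, resolve and the ask_dns callback are names I invented, the DNS server is faked with a function, and sources other than files and dns are simply skipped.

```python
from typing import Callable, Optional


def parse_hosts(hosts_text: str) -> dict[str, str]:
    """Map every hostname in /etc/hosts-style text to its IP address."""
    table: dict[str, str] = {}
    for line in hosts_text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments
        if not line:
            continue
        ip, *names = line.split()
        for name in names:
            table.setdefault(name, ip)  # the first entry for a name wins
    return table


def resolve(name: str, nsswitch_hosts: str, hosts_text: str,
            ask_dns: Callable[[str], Optional[str]]) -> Optional[str]:
    """Try each source named on the nsswitch 'hosts:' line, in order."""
    sources = nsswitch_hosts.split(":", 1)[1].split()
    for source in sources:
        if source == "files":
            answer = parse_hosts(hosts_text).get(name)
        elif source == "dns":
            answer = ask_dns(name)
        else:
            answer = None  # other sources are ignored in this sketch
        if answer is not None:
            return answer
    return None


if __name__ == "__main__":
    hosts = "127.0.0.1 localhost\n192.168.1.10 myserver.local\n"
    fake_dns = lambda hostname: "203.0.113.7"  # stand-in for a real DNS query
    print(resolve("myserver.local", "hosts: files dns", hosts, fake_dns))
    print(resolve("google.com", "hosts: files dns", hosts, fake_dns))
```

Swapping the order to "hosts: dns files" makes the fake DNS answer win even for myserver.local, which shows that the priority comes from nsswitch.conf and not from /etc/hosts itself.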

Network Interface Configuration

A network interface is your system's way of connecting to a network. It can be physical, like your ethernet port or WiFi card, or virtual, like a loopback interface. Each interface gets a name:

eth0 or enp3s0: your ethernet card

wlan0 or wlp2s0: your WiFi card

lo: the loopback interface, always 127.0.0.1, used by your system to talk to itself

You can see all your interfaces with:

ip link show

The location of these network config files varies by distro, which is one of the few places where the FHS does not give you full uniformity.

For Debian and older Ubuntu systems, the file is located at /etc/network/interfaces:

auto eth0

iface eth0 inet dhcp

This says: bring up eth0 automatically on boot, and get its IP via DHCP.

Service Configurations

When you run systemctl start nginx or systemctl enable ssh, systemd needs to know:

What is this service, actually?

How should it start?

Which user should run it?

What should happen if it crashes?

All of this is defined in a unit file.

/etc/systemd/system/ is where your local unit files live. These are either custom services you created yourself, or overrides to the default unit files that came with installed packages.

What does a unit file look like?

Let's look at a simple example. Say you want to create a service that runs a Node.js app on boot:

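[Unit]

Description=My Node.js App

After=network.target

[Service]

ExecStart=/usr/bin/node /home/sameer/app/index.js

Restart=always

User=sameer

[Install]

WantedBy=multi-user.target

Breaking down the three sections:

[Unit]: Metadata about the service.

After=network.target: order this service after the basic network stack has been set up.

[Service]: The actual behavior.

ExecStart: the command to run.

Restart=always: restart the process automatically whenever it exits, including after a crash.

User: run the process as this user, not as root.

[Install]: What systemctl enable should do.

WantedBy=multi-user.target: start this service as part of a normal boot. Without an [Install] section, the unit cannot be enabled to start on boot.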
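Because unit files are INI-style text, simple ones can be read with Python's configparser. Treat this as a sketch under assumptions: load_unit is a name I made up, and configparser is not a full systemd parser (for instance, systemd lets some keys repeat to build a list, while here the last value simply wins).

```python
import configparser

UNIT_TEXT = """\
[Unit]
Description=My Node.js App
After=network.target

[Service]
ExecStart=/usr/bin/node /home/sameer/app/index.js
Restart=always
User=sameer
"""


def load_unit(text: str) -> configparser.ConfigParser:
    """Parse simple unit-file text into sections and keys."""
    # interpolation=None: "%" specifiers such as %i must be kept literally.
    # strict=False: tolerate keys that appear more than once.
    parser = configparser.ConfigParser(interpolation=None, strict=False)
    parser.optionxform = str  # keep key case (ExecStart, not execstart)
    parser.read_string(text)
    return parser


if __name__ == "__main__":
    unit = load_unit(UNIT_TEXT)
    print(unit.sections())
    print(unit["Service"]["ExecStart"])
    print(unit["Service"]["User"])
```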

Enabling vs Starting

There is an important distinction here that trips up a lot of beginners:

systemctl start nginx # starts the service right now

systemctl enable nginx # makes it start automatically on every boot

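start only affects the current boot: the service runs now but will not come back after a reboot. enable only affects future boots: it does not start the service right now. You almost always want both: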

systemctl enable --now nginx

The --now flag starts it immediately and enables it for future boots in one command.

Where do default unit files live then?

If /etc/systemd/system/ is for local configs, where do the unit files for packages like nginx or ssh live? Those ship to /usr/lib/systemd/system/.

The rule is simple: /etc/systemd/system/ always takes priority over /usr/lib/systemd/system/. So if you want to override how a package's service behaves without touching the original file, you drop a unit file with the same name in /etc/systemd/system/, run systemctl daemon-reload, and systemd will use your version instead.
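The precedence rule is easy to model: walk the directories from highest to lowest priority and take the first match. The sketch below does just that. find_unit is a hypothetical helper, not part of systemd, and the list includes /run/systemd/system, which sits between the two directories discussed here.

```python
import tempfile
from pathlib import Path
from typing import Iterable, Optional

# Highest priority first.
DEFAULT_SEARCH_PATH = (
    "/etc/systemd/system",
    "/run/systemd/system",
    "/usr/lib/systemd/system",
)


def find_unit(name: str,
              search_path: Iterable[str] = DEFAULT_SEARCH_PATH) -> Optional[Path]:
    """Return the first matching unit file, mimicking systemd's precedence."""
    for directory in search_path:
        candidate = Path(directory) / name
        if candidate.is_file():
            return candidate
    return None


if __name__ == "__main__":
    # Demo with throwaway directories standing in for /etc and /usr/lib.
    with tempfile.TemporaryDirectory() as etc_dir, \
            tempfile.TemporaryDirectory() as usr_dir:
        (Path(usr_dir) / "nginx.service").write_text("[Unit]\nDescription=packaged\n")
        (Path(etc_dir) / "nginx.service").write_text("[Unit]\nDescription=override\n")
        winner = find_unit("nginx.service", [etc_dir, usr_dir])
        print(winner.read_text())
```

On a real system, systemctl cat nginx prints the path of the file systemd actually loaded, which is a quick way to confirm that your override took effect.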

/proc: The Process Filesystem

/proc is one of those things that genuinely makes you stop and think. It looks like a directory full of files, but none of these files actually exist on your disk. There is nothing written to your SSD or HDD here.

/proc is a virtual filesystem that the kernel generates in memory, live, every time you look at it.

Think of it as a window into your running kernel. Whatever is happening on your system right now, the kernel is exposing it as readable files inside /proc. The moment a process starts, an entry appears. The moment it dies, it disappears.

You can verify this yourself:

ls /proc

You will see a bunch of numbered directories like 1, 423, 1089 and so on. Each number is a PID (Process ID), and each directory contains information about that running process. Open one up:

ls /proc/1

You will find files like status, cmdline, maps, fd and more, all describing exactly what that process is doing right now.

For example:

cat /proc/1/status

This will show you the name of the process, its state, memory usage, which user is running it, and more. All of that, served as a plain text file.
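Since the file is just "Key: value" lines, turning it into a dictionary takes a few lines of Python. This is a minimal sketch: parse_proc_status is a made-up name, and the sample text is a shortened, hypothetical excerpt of what the real file contains.

```python
def parse_proc_status(text: str) -> dict[str, str]:
    """Turn the 'Key:<tab>value' lines of /proc/<PID>/status into a dict."""
    info: dict[str, str] = {}
    for line in text.splitlines():
        key, separator, value = line.partition(":")
        if separator:
            info[key] = value.strip()
    return info


if __name__ == "__main__":
    # Shortened, hypothetical excerpt for illustration.
    sample = "Name:\tsystemd\nState:\tS (sleeping)\nPid:\t1\nVmRSS:\t   12345 kB\n"
    status = parse_proc_status(sample)
    print(status["Name"], "|", status["State"], "|", status["VmRSS"])
```

On a live system you would feed it open("/proc/self/status").read(), where /proc/self always points at the process doing the reading.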

This is the "everything is a file" philosophy at its most elegant. The kernel is not a black box. It is constantly talking to you through /proc, and all you need to read it is cat.

Routing Tables

When your system wants to send a packet to some IP address, it does not just blindly throw it onto the network. It first consults a routing table, which is essentially a set of rules that answer one question: for this destination, which interface should I use and where should I forward the packet?

A simple way to see your routing table is:

ip route show

You will see something like:

default via 192.168.1.1 dev wlan0

192.168.1.0/24 dev wlan0 proto kernel scope link src 192.168.1.105

The first line says: for everything else, forward to 192.168.1.1 (your router) via wlan0.

The second line says: for anything in the 192.168.1.0/24 range, you can reach it directly through wlan0 without going through a gateway.

Now, this same information is also exposed as a file inside /proc:

cat /proc/net/route

Iface Destination Gateway Flags RefCnt Use Metric Mask

wlan0 00000000 0101A8C0 0003 0 0 100 00000000

wlan0 0001A8C0 00000000 0001 0 0 100 00FFFFFF

This is the raw version of the same routing table, but in hex. The values for Destination, Gateway and Mask are in little endian hex format, which is why 0101A8C0 translates to 192.168.1.1. Not the most human readable format, which is exactly why tools like ip route exist on top of it.
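If you want to check the hex conversion yourself, here is a small sketch. The function name is mine, and it assumes a little-endian machine (such as x86), because the kernel prints these values in the host's byte order.

```python
import socket
import struct


def route_hex_to_ip(hex_value: str) -> str:
    """Decode an address from /proc/net/route, assuming a little-endian host."""
    # Pack the number back into 4 bytes in little-endian order, which
    # restores the original network-order bytes of the address.
    packed = struct.pack("<I", int(hex_value, 16))
    return socket.inet_ntoa(packed)


if __name__ == "__main__":
    print(route_hex_to_ip("0101A8C0"))  # gateway of the default route
    print(route_hex_to_ip("0001A8C0"))  # destination of the local network route
    print(route_hex_to_ip("00FFFFFF"))  # its netmask
```

For the table above, this yields 192.168.1.1, 192.168.1.0 and 255.255.255.0, matching the /24 network that ip route show printed.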

The key insight here is that /proc/net/route is not a config file. You cannot edit it to change your routes. It is purely a read only window into what the kernel currently knows about routing, consistent with the whole philosophy of /proc.

System Logs

When something goes wrong on your system, the first place you go is /var/log. This is where Linux keeps logs for pretty much everything: the kernel, system services, applications, authentication events, and more.

ls /var/log

You will see a bunch of files and directories. Here are the important ones:

/var/log

├── syslog -> General system activity logs (Debian/Ubuntu)

├── auth.log -> Authentication events (logins, sudo usage)

├── kern.log -> Kernel messages

├── dmesg -> Boot time hardware detection logs

└── journal/ -> systemd's binary log store

Reading logs

For most log files, a simple cat or tail works:

tail -f /var/log/auth.log

The -f flag follows the file in real time, so you can watch events as they come in. Useful for monitoring login attempts or debugging a service.

For systemd based systems, the preferred way to read logs is through journalctl:

journalctl -u nginx # logs for a specific service

journalctl -f # follow logs in real time

journalctl --since today # logs from today only

What insights do logs actually give you?

A few practical examples of what you can catch from logs:

Someone repeatedly trying to SSH into your server with wrong passwords: visible in auth.log

A service that keeps crashing on boot: visible in journalctl -u servicename

A kernel panic or hardware error: visible in kern.log or dmesg

General system misbehavior: visible in syslog
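As an example of the first case, here is a rough sketch that counts failed SSH password attempts per source IP. The helper name and the sample lines are made up; the regular expression assumes the usual OpenSSH "Failed password for ... from ..." wording, which may differ on your system.

```python
import re
from collections import Counter

# Matches sshd lines such as:
#   Failed password for invalid user admin from 203.0.113.5 port 51234 ssh2
FAILED_LOGIN = re.compile(r"Failed password for (?:invalid user )?(\S+) from (\S+)")


def failed_logins_by_ip(log_lines) -> Counter:
    """Count failed SSH password attempts per source IP address."""
    counts: Counter = Counter()
    for line in log_lines:
        match = FAILED_LOGIN.search(line)
        if match:
            counts[match.group(2)] += 1
    return counts


if __name__ == "__main__":
    # Hypothetical log lines for illustration.
    sample = [
        "Jan 10 10:00:01 web sshd[811]: Failed password for root from 203.0.113.5 port 4242 ssh2",
        "Jan 10 10:00:03 web sshd[811]: Failed password for invalid user admin from 203.0.113.5 port 4243 ssh2",
        "Jan 10 10:01:00 web sshd[902]: Accepted publickey for sameer from 198.51.100.9 port 5000 ssh2",
    ]
    print(failed_logins_by_ip(sample).most_common())
```

To run it against a real log, pass an open file instead of the list, for example open("/var/log/auth.log"), which usually requires root.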

File Descriptors

When a program opens a file, the kernel does not give it direct access to the file. Instead, it gives back a small integer called a file descriptor. This integer is just a reference that the program uses to interact with the file going forward.

Every process gets three file descriptors by default when it starts:

0 -> stdin (standard input)

1 -> stdout (standard output)

2 -> stderr (standard error)

When the program opens additional files, they get assigned the next available numbers: 3, 4, 5 and so on.

You can see the file descriptors of any running process through /proc:

ls /proc/<PID>/fd

This will show you every file, socket, pipe or device that process currently has open, each represented as a numbered symlink.
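You can watch this happen from inside a process. The sketch below opens a file with the low-level os.open call, which returns the raw integer, and then lists /proc/self/fd. It assumes a Linux system with /proc mounted, and open_descriptors is a name I made up.

```python
import os
import tempfile


def open_descriptors() -> list[int]:
    """List the file descriptor numbers this process currently has open."""
    # Note: os.listdir itself briefly opens /proc/self/fd, so one extra
    # descriptor for that directory shows up in the result.
    return sorted(int(name) for name in os.listdir("/proc/self/fd"))


if __name__ == "__main__":
    with tempfile.NamedTemporaryFile() as tmp:
        fd = os.open(tmp.name, os.O_RDONLY)  # the kernel hands back an integer
        print("new descriptor:", fd)
        print("open right now:", open_descriptors())
        print("points at:", os.readlink(f"/proc/self/fd/{fd}"))
        os.close(fd)
```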

Everything is a File Descriptor

This is where the "everything is a file" philosophy gets very practical. In Linux, file descriptors are not just for files on disk. They represent:

Regular files

Directories

Network sockets

Pipes between processes

Device files in /dev

When your Node.js server accepts an incoming HTTP connection, the kernel hands it a file descriptor for that socket. The server reads from it and writes to it just like a regular file. Same interface, whether it is a text file or a network connection.
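The same idea can be shown in Python without any network at all. In this sketch (the function name is hypothetical), two connected sockets are driven with os.write and os.read, the same low-level calls you would use on a regular file's descriptor.

```python
import os
import socket


def echo_through_socket(message: bytes) -> bytes:
    """Send bytes over a socket pair using plain file-descriptor I/O."""
    left, right = socket.socketpair()  # two connected sockets, no network needed
    try:
        # fileno() exposes the integer descriptor behind each socket.
        os.write(left.fileno(), message)
        return os.read(right.fileno(), len(message))
    finally:
        left.close()
        right.close()


if __name__ == "__main__":
    print(echo_through_socket(b"hello over a socket"))
```

Nothing in os.write or os.read is socket-specific. They only see an integer, and the kernel works out what is on the other end.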

The Default Limit and Why it Matters

The kernel puts a limit on how many file descriptors a single process can have open at once. You can check yours with:

ulimit -n

On most systems the soft limit defaults to 1024. This means a single process can have at most 1024 files, sockets, or connections open at the same time.

For a simple script or a CLI tool, 1024 is more than enough. But for a web server or a WebSocket server, this becomes a real problem fast.

The WebSocket Problem

A WebSocket server maintains a persistent open connection with every connected client. Each connection is a file descriptor. So with the default limit, your server tops out at a little under 1024 concurrent users, because stdin, stdout, stderr, the listening socket, and any open log files count against the limit too. Once the limit is reached, accepting the next connection fails with a Too many open files error (EMFILE) and your server starts rejecting connections.

This is not a bug in your code. It is a system level limit you need to raise.

Raising the Limit

There are two limits to be aware of:

ulimit -n # soft limit, applies to your current session

ulimit -Hn # hard limit, the ceiling up to which the soft limit can be raised
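A process can also inspect and adjust its own limits at runtime. The sketch below uses Python's standard resource module to lift the soft limit up to the hard limit for the current process only. The function name is mine, and the sketch deliberately leaves the limit untouched if the kernel refuses the change.

```python
import resource


def raise_soft_nofile_limit() -> int:
    """Lift this process's soft open-file limit up to its hard limit."""
    soft, hard = resource.getrlimit(resource.RLIMIT_NOFILE)
    if hard != resource.RLIM_INFINITY and soft != hard:
        try:
            # Unprivileged processes may raise soft up to, but not past, hard.
            resource.setrlimit(resource.RLIMIT_NOFILE, (hard, hard))
        except (ValueError, OSError):
            pass  # the kernel refused; keep the current limit
    return resource.getrlimit(resource.RLIMIT_NOFILE)[0]


if __name__ == "__main__":
    print("before (soft, hard):", resource.getrlimit(resource.RLIMIT_NOFILE))
    print("soft limit is now:", raise_soft_nofile_limit())
```

This only affects the running process and its children. Raising the hard limit itself still needs root or one of the configuration changes described in this section.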

To raise it permanently, you edit /etc/security/limits.conf:

* soft nofile 65536

* hard nofile 65536

The * applies to all users. nofile stands for number of open files. 65536 is a common production value for web servers.

For systemd managed services, you set it directly in the unit file instead, because limits.conf is applied by PAM at login and does not affect services started by systemd:

[Service]

LimitNOFILE=65536

After making these changes, restart the service (running systemctl daemon-reload first if you edited a unit file) and verify:

cat /proc/<PID>/limits
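The line to look for in that output is "Max open files". Here is a small sketch that extracts it. The function name is invented and the sample text is a shortened, hypothetical excerpt of the real file.

```python
from typing import Optional

LIMITS_SAMPLE = """\
Limit                     Soft Limit           Hard Limit           Units
Max cpu time              unlimited            unlimited            seconds
Max open files            65536                65536                files
"""


def max_open_files(limits_text: str) -> Optional[tuple[str, str]]:
    """Return the (soft, hard) 'Max open files' values from /proc/<PID>/limits."""
    label = "Max open files"
    for line in limits_text.splitlines():
        if line.startswith(label):
            # Values stay strings because a limit can also read "unlimited".
            soft, hard = line[len(label):].split()[:2]
            return soft, hard
    return None


if __name__ == "__main__":
    print(max_open_files(LIMITS_SAMPLE))
```

If both values show the number you configured, the new limit reached the running process.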

This is one of the first things you tune when deploying a WebSocket server or any high concurrency application in production. A server with default limits will quietly start failing under load, and the logs will tell you exactly why.

Conclusion

In the end, the Linux filesystem is far more than a collection of folders. It reflects a philosophy of simplicity, consistency, and treating system resources through one unified interface.

Once you understand what lives where, Linux stops feeling mysterious and starts feeling logical. And as developers, that understanding gives us a real edge when working with servers, debugging production systems, or simply feeling more at home inside the machines that run the modern web.